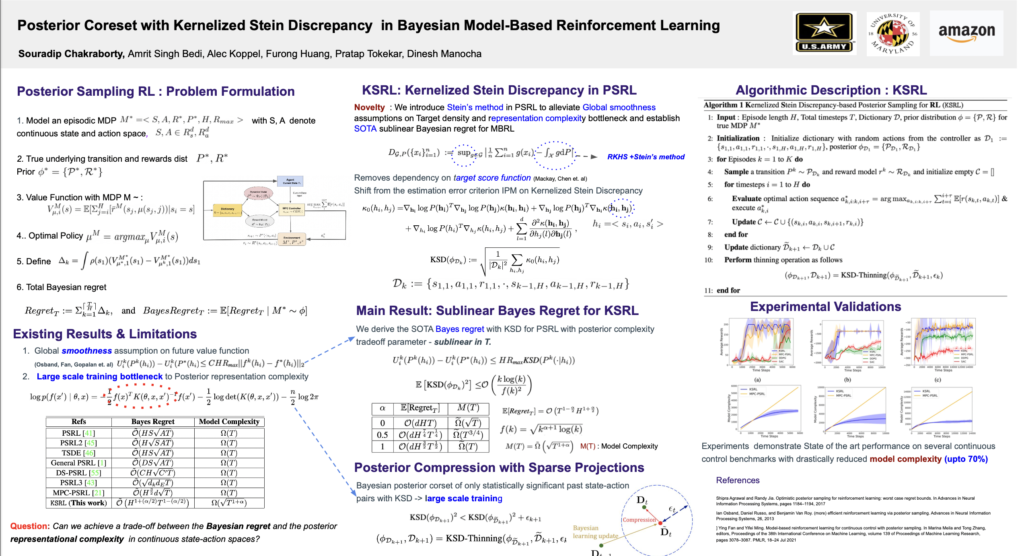

VIIII. Model-Based Reinforcement Learning

Souradip Chakraborty · Amrit Bedi · Alec Koppel · Furong Huang · Pratap Tokekar · Dinesh Manocha, “Posterior Coreset Construction with Kernelized Stein Discrepancy for Model-Based Reinforcement Learning”, Workshop on Score-Based Methods at Neural Information Processing System (NeurIPS), 2022. Paper Link, Workshop Link Fri 2 Dec, 8:50 a.m. CST Room 293 – 294, Poster session 10-11 am, 3-4 pm CST

Model-based reinforcement learning (MBRL) exhibits favorable performance in practice, but its theoretical guarantees are mostly restricted to the setting when the transition model is Gaussian or Lipschitz and demands a posterior estimate whose representational complexity grows unbounded with time. In this work, we develop a novel MBRL method (i) which relaxes the assumptions on the target transition model to belong to a generic family of mixture models; (ii) is applicable to large-scale training by incorporating a compression step such that the posterior estimate consists of a \emph{Bayesian coreset} of only statistically significant past state-action pairs; and (iii) {exhibits a Bayesian regret of O(dH1+(α/2)T1−(α/2)) with coreset size of Ω(T1+α), where d is the aggregate dimension of state action space, H is the episode length, T is the total number of time steps experienced, and α∈(0,1] is the tuning parameter which is a novel introduction into the analysis of MBRL in this work}. To achieve these results, we adopt an approach based upon Stein’s method, which allows distributional distance to be evaluated in closed form as the kernelized Stein discrepancy (KSD). Experimentally, we observe that this approach is competitive with several state-of-the-art RL methodologies, and can achieve up to 50% reduction in wall clock time in some continuous control environments.